XML Sitemaps Explained (And How to Optimise Them)

Martyn Rance

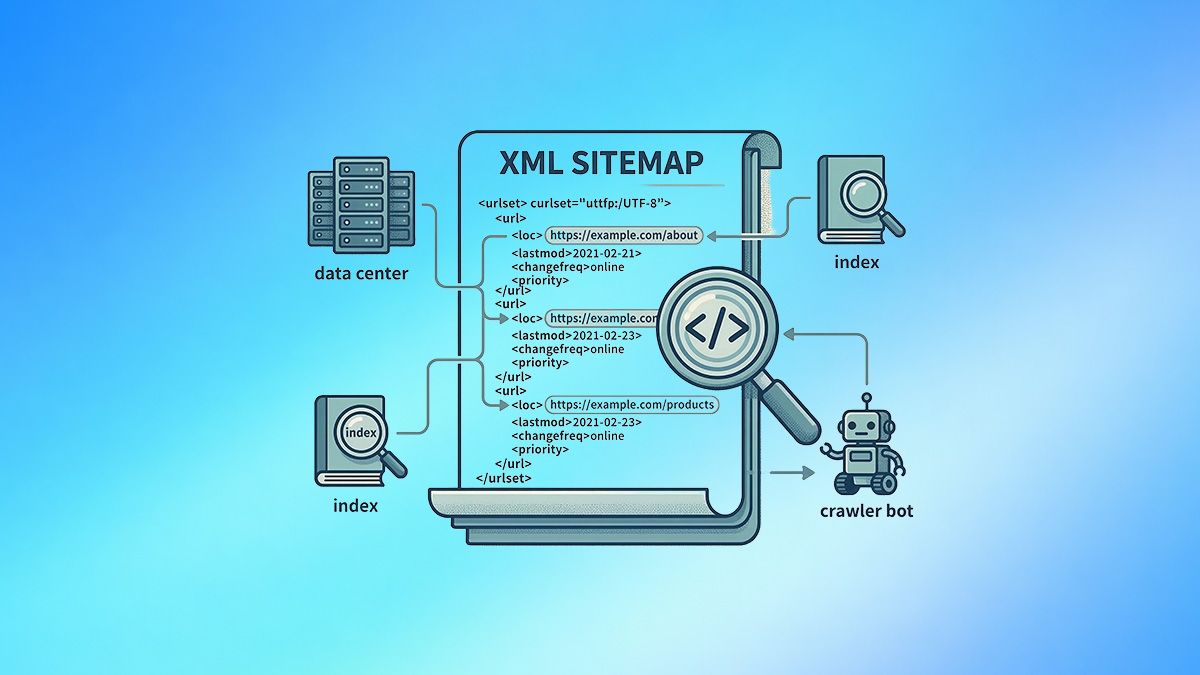

An XML sitemap is a machine-readable file that lists all the important URLs of your website's pages, images, and videos. It acts as a curated roadmap for search engines like Google and Bing, guiding their automated crawlers directly to your most valuable content. While submitting a sitemap is not a direct ranking factor, it facilitates more thorough crawling and indexing, which is the foundational first step toward achieving search visibility.

Why You Need an XML Sitemap (The "Safety Net")

Search engines primarily navigate the web by following internal links from one page to another. However, an XML sitemap serves as a critical safety net to help search engines discover URLs that might be isolated or difficult to reach through your site's standard navigation.

A well-maintained sitemap also alerts search engines to new or recently updated pages, which can lead to faster indexing than waiting for crawlers to find the content through links alone. An XML sitemap is not a replacement for sound site architecture, however: unlike standard contextual links, sitemap entries do not pass "link equity" (ranking authority) or topical relevance between pages.

How to Create and Submit Your Sitemap

Most modern Content Management Systems (CMS) and SEO plugins will automatically generate and dynamically update your XML sitemap whenever you publish or alter content. Once generated, this file usually lives in the root directory of your website (e.g., yourdomain.com/sitemap.xml).
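To make the file format concrete, here is a minimal sketch of what a CMS or plugin does behind the scenes, using only Python's standard library. The function name `build_sitemap` and the example.com URLs are illustrative assumptions, not part of any particular CMS.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs; lastmod may be None.

    Hypothetical helper for illustration only.
    """
    # Serialize the sitemap namespace as the default (unprefixed) namespace.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        if lastmod:
            ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod
    # Returns bytes, including the XML declaration.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    xml_bytes = build_sitemap([
        ("https://example.com/", "2024-01-15"),
        ("https://example.com/about", None),
    ])
    print(xml_bytes.decode("utf-8"))
```

In practice you would rarely write this by hand, but seeing the structure makes it easier to read the file your CMS produces.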

To maximize its effectiveness, you should manually submit your sitemap URL to Google Search Console and Bing Webmaster Tools. Submitting your sitemap directly allows you to monitor how search engines view your site, track indexation status, and quickly identify any crawling errors or excluded URLs.

Best Practices for Optimising XML Sitemaps

A broken or inaccurate sitemap can mislead crawlers, waste your site's precious crawl budget, and delay the indexing of your critical content. To keep your sitemaps optimized, follow these core technical rules:

1. Keep It Lean and Focused

Your sitemap is a priority list, not an exhaustive inventory of your server. It should only contain the high-value URLs you want search engines to crawl and index. Actively exclude low-value pages, expired event listings, pages with noindex tags, redirected URLs, and pages returning a 404 (Not Found) or 410 (Gone) status code.
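The exclusion rules above can be sketched as a simple filter. This assumes you already have crawl data for each URL; the `Page` record and its fields are hypothetical and would map onto whatever your crawler or CMS exposes.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """Hypothetical crawl record for one URL."""
    url: str
    status: int            # HTTP status returned when the URL is requested
    noindex: bool = False  # True if the page carries a noindex directive


def sitemap_eligible(pages):
    """Keep only URLs that return 200 and are allowed to be indexed.

    Redirects (3xx), 404 and 410 responses are dropped because their
    status is not 200.
    """
    return [p.url for p in pages if p.status == 200 and not p.noindex]


if __name__ == "__main__":
    pages = [
        Page("https://example.com/", 200),
        Page("https://example.com/old-page", 301),
        Page("https://example.com/thank-you", 200, noindex=True),
        Page("https://example.com/expired-event", 410),
    ]
    print(sitemap_eligible(pages))
```

Deciding what counts as "low-value" is an editorial judgement, so that part is left out of the sketch.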

2. Include Only Canonical URLs

To prevent search engines from wasting resources evaluating duplicate content, ensure your sitemap only lists the preferred, "canonical" versions of your pages. The URLs in your sitemap must exactly match the canonical tags declared on those pages.
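One way to audit this is to compare each sitemap URL against the canonical tag in the page's HTML. The sketch below uses Python's built-in HTML parser and assumes you have already fetched the page source; `CanonicalFinder` and `canonical_matches` are illustrative names.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag == "link" and self.canonical is None:
            attributes = dict(attrs)
            rel_values = (attributes.get("rel") or "").lower().split()
            if "canonical" in rel_values:
                self.canonical = attributes.get("href")


def canonical_matches(sitemap_url, html):
    """Return True only if the page declares sitemap_url as its canonical."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical == sitemap_url


if __name__ == "__main__":
    html = '<head><link rel="canonical" href="https://example.com/shoes"></head>'
    print(canonical_matches("https://example.com/shoes", html))
    print(canonical_matches("https://example.com/shoes?ref=nav", html))
```

The comparison is a strict string match, so differences such as a trailing slash or a tracking parameter are reported as mismatches.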

3. Maintain Accurate <lastmod> Dates

The <lastmod> (last modified) tag tells search engines when a page was last updated. Accurate <lastmod> dates signal content freshness and encourage Google's systems to recrawl the page to pick up your latest changes. Only change the value when the page content itself changes; a date that is bumped on every build is no longer an accurate signal.
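The sitemap protocol expects <lastmod> values in W3C Datetime format (for example 2024-03-05 or 2024-03-05T14:30:00+00:00). A minimal sketch of producing such a value from a stored modification time follows; treating timestamps without a timezone as UTC is an assumption you should adapt to how your system stores dates.

```python
from datetime import datetime, timezone


def format_lastmod(modified_at):
    """Format a datetime as a W3C Datetime string for <lastmod>."""
    if modified_at.tzinfo is None:
        # Assumption: timestamps without a timezone are stored in UTC.
        modified_at = modified_at.replace(tzinfo=timezone.utc)
    return modified_at.isoformat(timespec="seconds")


if __name__ == "__main__":
    print(format_lastmod(datetime(2024, 3, 5, 14, 30, tzinfo=timezone.utc)))
```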

4. Respect Strict Size Limits

Search engines enforce hard limits on sitemap files. A single XML sitemap file can contain a maximum of 50,000 URLs and must not exceed 50MB when uncompressed. If your site exceeds either limit, you must split your URLs across multiple sitemap files and use a sitemap index file to group them together.
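Splitting and indexing can be sketched as follows. The helper names and the sitemap-1.xml style file names are illustrative assumptions. The sketch only enforces the 50,000-URL limit; a real generator would also check the 50MB uncompressed size of each file.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Build a sitemap index that points at each child sitemap file."""
    ET.register_namespace("", SITEMAP_NS)
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for loc in sitemap_urls:
        sitemap = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(sitemap, f"{{{SITEMAP_NS}}}loc").text = loc
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    urls = [f"https://example.com/products/{i}" for i in range(120_001)]
    chunks = chunk_urls(urls)
    # Hypothetical naming scheme for the child sitemap files.
    child_files = [
        f"https://example.com/sitemap-{n}.xml"
        for n in range(1, len(chunks) + 1)
    ]
    print(len(chunks), "sitemap files needed")
    print(build_sitemap_index(child_files).decode("utf-8"))
```

You would then submit only the index file to Google Search Console and Bing Webmaster Tools.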

5. Split Sitemaps by Content Type

For large sites, it is best practice to organize your sitemaps by content type, section, or date. Segmenting your sitemaps makes them easier to manage and makes it easier to isolate indexation errors when debugging in Google Search Console.
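As a rough illustration, URLs can be grouped by their first path segment so that each section gets its own sitemap file. This assumes your URL structure mirrors your content types (for example /blog/ and /products/); the fallback group name "pages" is an arbitrary choice.

```python
from collections import defaultdict
from urllib.parse import urlparse


def group_by_section(urls):
    """Group URLs by first path segment; top-level pages go to 'pages'."""
    groups = defaultdict(list)
    for url in urls:
        segments = [s for s in urlparse(url).path.split("/") if s]
        # Only treat the first segment as a section if something follows it.
        section = segments[0] if len(segments) > 1 else "pages"
        groups[section].append(url)
    return dict(groups)


if __name__ == "__main__":
    urls = [
        "https://example.com/",
        "https://example.com/about",
        "https://example.com/blog/xml-sitemaps",
        "https://example.com/products/red-shoes",
        "https://example.com/blog/crawl-budget",
    ]
    for section, members in group_by_section(urls).items():
        print(section, len(members))
```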

6. Use Compression (But Understand Its Limits)

You can use gzip compression to make your sitemap files download faster for bots. However, it is a common SEO myth that compressing sitemaps will save or increase your crawl budget. Search engine bots still need to fetch the gzipped files from your server and decompress them, so the overall crawl resource expenditure remains largely the same.
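Compressing a sitemap takes one call with Python's standard gzip module. The sketch below checks the size limit before compressing, because the 50MB limit applies to the uncompressed file; the function name is an illustrative assumption.

```python
import gzip

# The 50MB limit applies to the uncompressed file (52,428,800 bytes).
MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024


def gzip_sitemap(xml_bytes):
    """Gzip sitemap XML after checking the uncompressed size limit."""
    if len(xml_bytes) > MAX_UNCOMPRESSED_BYTES:
        raise ValueError("sitemap exceeds 50MB uncompressed; split it first")
    return gzip.compress(xml_bytes)


if __name__ == "__main__":
    entry = b"<url><loc>https://example.com/page</loc></url>"
    raw = b"<urlset>" + entry * 1000 + b"</urlset>"
    packed = gzip_sitemap(raw)
    print(len(raw), "bytes uncompressed,", len(packed), "bytes gzipped")
```

The compressed file is conventionally served as sitemap.xml.gz and referenced by that URL.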

By maintaining strict sitemap hygiene and ensuring your files perfectly reflect your live, high-priority pages, you remove crawler roadblocks and create a frictionless path for search engines to index your website's best assets.